One of the biggest differences between AEO and traditional SEO: once you ship, you can't see a ranking number.

SEO has Google Search Console, Ahrefs, clear ranking digits. AEO doesn't — ChatGPT won't tell you "you got cited 7 times this month", and neither will Perplexity.

You shipped robots.txt, JSON-LD, and FAQ Schema, and rewrote your copy to be AI-readable. And then? Did it work? Clients aren't going to email you saying "by the way, ChatGPT mentioned you."

We use a three-layer check: manual ask, bot logs, referral. Quickest to slowest, lightest to hardest.

Layer One: Manually Ask ChatGPT / Perplexity

The simplest. Ten minutes.

Pick three to five questions a customer would ask before buying, and ask each on ChatGPT, Perplexity, Claude, and Gemini. Check whether your brand, link, or content is cited in the answer.

The point is asking from the customer's angle — not from your own ("how do I find Heiso?").

A few practical notes:

- Test each model separately: the set of sites ChatGPT cites doesn't match Perplexity's. Perplexity surfaces its sources directly, which makes it easier to track; ChatGPT requires a follow-up "what's the source?" to reveal them

- Reword the same query differently: "digital transformation consultancy", "AI integration team", "B2B technology consulting" can return wildly different results

- Log it: test once a month and record the results in a Google Sheet. Only over time can you see the trend

This layer's limit: subjective, unquantifiable, and answers vary each time. But when your site starts getting cited, this layer feels it first.
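One way to make the monthly log a little less subjective is to turn it into a citation rate per month. The sketch below assumes the sheet is exported as CSV with the columns `month`, `model`, `query`, and `cited`; those column names and the sample rows are invented for illustration, not taken from any real log.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export of the monthly check sheet: one row per (month, model, query).
# The rows below are made-up sample data.
SAMPLE_LOG = """month,model,query,cited
2025-01,ChatGPT,digital transformation consultancy,no
2025-01,Perplexity,digital transformation consultancy,no
2025-02,ChatGPT,digital transformation consultancy,no
2025-02,Perplexity,digital transformation consultancy,yes
2025-03,ChatGPT,AI integration team,yes
2025-03,Perplexity,AI integration team,yes
"""


def citation_rate_by_month(csv_text):
    """Return {month: fraction of checks in which the brand was cited}."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        totals[row["month"]] += 1
        if row["cited"].strip().lower() == "yes":
            cited[row["month"]] += 1
    return {month: cited[month] / totals[month] for month in sorted(totals)}


rates = citation_rate_by_month(SAMPLE_LOG)
for month, rate in rates.items():
    print(f"{month}: {rate:.0%} of checks cited the brand")
```

Because answers vary from run to run, a single month's figure means little; the rate is only useful as a trend across several months.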

Layer Two: Bot Traffic Logs

For AI to cite you, it had to crawl you first. Whether it crawled, which bots came — logs tell the truth.

The User-Agents to watch:

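- GPTBot: OpenAI training crawler

- OAI-SearchBot: OpenAI live-search crawler (the one AEO directly affects)

- PerplexityBot: Perplexity

- ClaudeBot: Anthropic

- Google-Extended: Google Gemini training control (per Google's crawler documentation this is a robots.txt token without its own User-Agent string, so it may not show up in logs)

- Bingbot: shared by Bing and Copilot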
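Matching these User-Agents against an access log can be sketched in a few lines of Python. This is a minimal example, assuming the nginx/Apache "combined" log format; the sample log lines, IP addresses, and simplified User-Agent strings are invented for illustration, and real bot User-Agent strings are longer.

```python
import re
from collections import Counter

# Substrings to look for in the User-Agent header, matched case-insensitively.
# Google-Extended is left out on the assumption that it is a robots.txt token
# and not a string that appears in the User-Agent header.
AI_BOT_TOKENS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot", "Bingbot"]

# Captures the request path and the last quoted field (the User-Agent)
# of a line in "combined" log format.
LINE_RE = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Made-up sample lines using documentation-reserved IP ranges.
SAMPLE_LINES = [
    '203.0.113.5 - - [01/Jan/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [01/Jan/2025:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2025:10:02:00 +0000] "GET /blog/aeo HTTP/1.1" 200 8000 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.8 - - [01/Jan/2025:10:03:00 +0000] "GET / HTTP/1.1" 200 1024 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]


def classify(user_agent):
    """Return the matching bot token, or None for everything else."""
    lowered = user_agent.lower()
    for token in AI_BOT_TOKENS:
        if token.lower() in lowered:
            return token
    return None


def count_bot_hits(lines):
    """Count requests per (bot, path); unparseable and non-bot lines are skipped."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if match is None:
            continue
        bot = classify(match.group("ua"))
        if bot is not None:
            hits[(bot, match.group("path"))] += 1
    return hits


hits = count_bot_hits(SAMPLE_LINES)
per_bot = Counter()
for (bot, _path), count in hits.items():
    per_bot[bot] += count

print(per_bot)
print(hits)
```

Keying the counter on `(bot, path)` answers both questions at once: which bots came, and which pages each one fetched. User-Agent strings can be spoofed, so treat the counts as an estimate unless the source IPs are verified as well.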

Three tool tiers to choose from:

- nginx / Apache access logs + grep on User-Agent: most raw, most flexible, you write it yourself

- Cloudflare Security → Bots: free, quick view of bot share, but AI bot classification is coarse

- Dedicated AEO dashboard: classifies AI bots, separates them from human traffic, shows which pages ChatGPT actually fetched

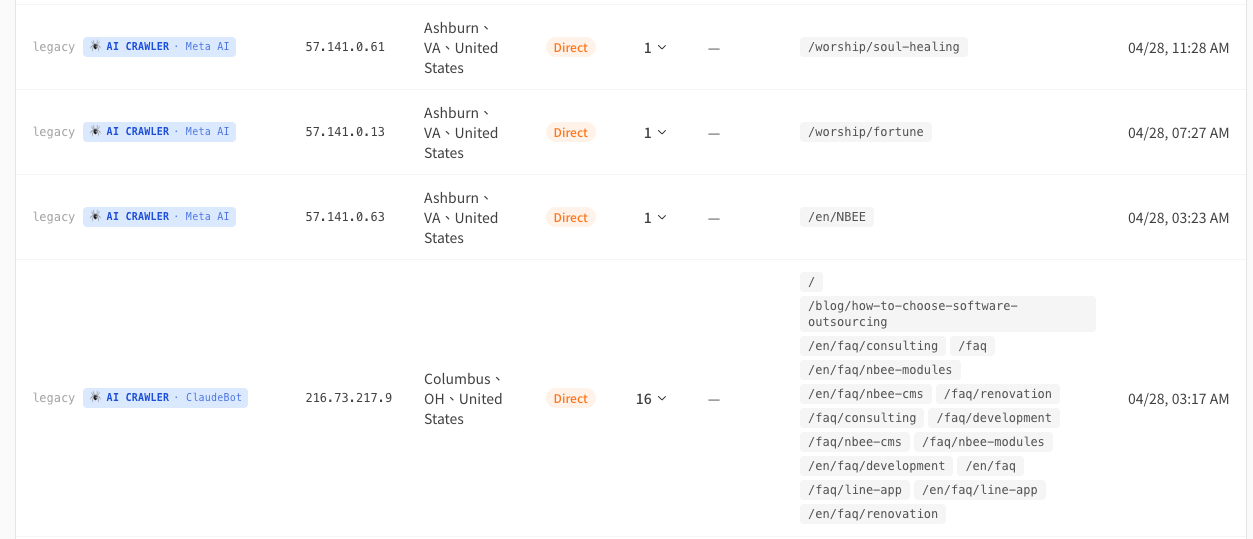

The third one we built ourselves at Heiso, and it ships standard on the B2B sites we deliver — /admin/visits looks like this:

What it does:

- AI crawler classification: auto-detects 15+ types — GPTBot, ChatGPT-User, ClaudeBot, PerplexityBot, Google-Extended, Bingbot, Applebot-Extended — and groups them

- Real-time AI assistant fetches: ChatGPT browse / Microsoft Copilot / Bing Chat hitting from datacenter IPs are recognized too, separated from regular visitors

- What pages each bot has seen: directly verifies "what AI is interested in on your site"

- Human vs bot in two tabs: human traffic stats don't get polluted by bot traffic

We built this dashboard because we hit the "no idea if AEO is working" pain ourselves. It's especially useful for clients in the middle of an AEO push but unsure whether it's landing — for the first time you actually see how interested ChatGPT is in your site, instead of guessing.

This layer measures "how many times AI read you", not "how many times AI cited you". Crawled doesn't mean cited, but no crawl means no citation — traffic here means the infrastructure is past the bar.

Layer Three: AI Referral Traffic

The hardest, slowest, most valuable signal.

When AI not only cites you but surfaces the link and a user clicks through — that visit lands in your Analytics referral report.

Google Analytics, Plausible, custom analytics — any will do. The referrer looks like:

- chatgpt.com

- perplexity.ai

- claude.ai

- gemini.google.com

If the past few months showed 0 and then visits begin to appear, at one a week, three a week, or ten a month, your AEO is actually working.

A reminder: AI referral volume stays small, but the conversion rate is 5×+ what Google organic delivers. Don't dismiss "only 20 visitors" — those 20 are walking in with a question already in mind.
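If your analytics tool can export raw visits, the referral check can be sketched as a small filter over referrer URLs. The example below assumes each visit is available as a `(week, referrer_url)` pair; that shape, the week labels, and the sample visits are hypothetical, and the host list is just the four referrers named above.

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer hosts treated as AI assistants (the four named in this section).
AI_REFERRER_HOSTS = {"chatgpt.com", "perplexity.ai", "claude.ai", "gemini.google.com"}


def ai_source(referrer):
    """Return the AI host a referrer URL belongs to, or None.

    Expects a full URL with a scheme; an empty or scheme-less value yields None.
    """
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return host if host in AI_REFERRER_HOSTS else None


def weekly_ai_referrals(visits):
    """Count AI-referred visits per week from (week, referrer_url) pairs."""
    counts = Counter()
    for week, referrer in visits:
        if ai_source(referrer) is not None:
            counts[week] += 1
    return counts


# Made-up sample visits for illustration.
SAMPLE_VISITS = [
    ("2025-W01", "https://www.google.com/"),
    ("2025-W02", "https://chatgpt.com/"),
    ("2025-W03", "https://www.perplexity.ai/search"),
    ("2025-W03", "https://chatgpt.com/"),
    ("2025-W03", ""),
]

weekly = weekly_ai_referrals(SAMPLE_VISITS)
for week in sorted(weekly):
    print(week, weekly[week])
```

Counting per week keeps the numbers comparable over time, which matters more here than the absolute volume.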

One Thing to Set Expectations On

You did everything and still see no results for weeks, even months. That's normal.

AI model update cycles run in months, and citation ranking mechanisms aren't public. New content getting steady citations usually takes 4–8 weeks — sometimes longer, especially if your brand is "new" to the AI.

Don't quit in week two because "ChatGPT still hasn't mentioned us". The AEO feedback loop runs in weeks to months, not days.

Closing

The SEO era had Search Console telling you your rank; AEO has no central dashboard, but you can build your own — manual asking is the gauge, bot logs are the engine light, referral is the odometer.

Want a hand setting this up, or interpreting what the bot logs are telling you? → Book a consultation